|

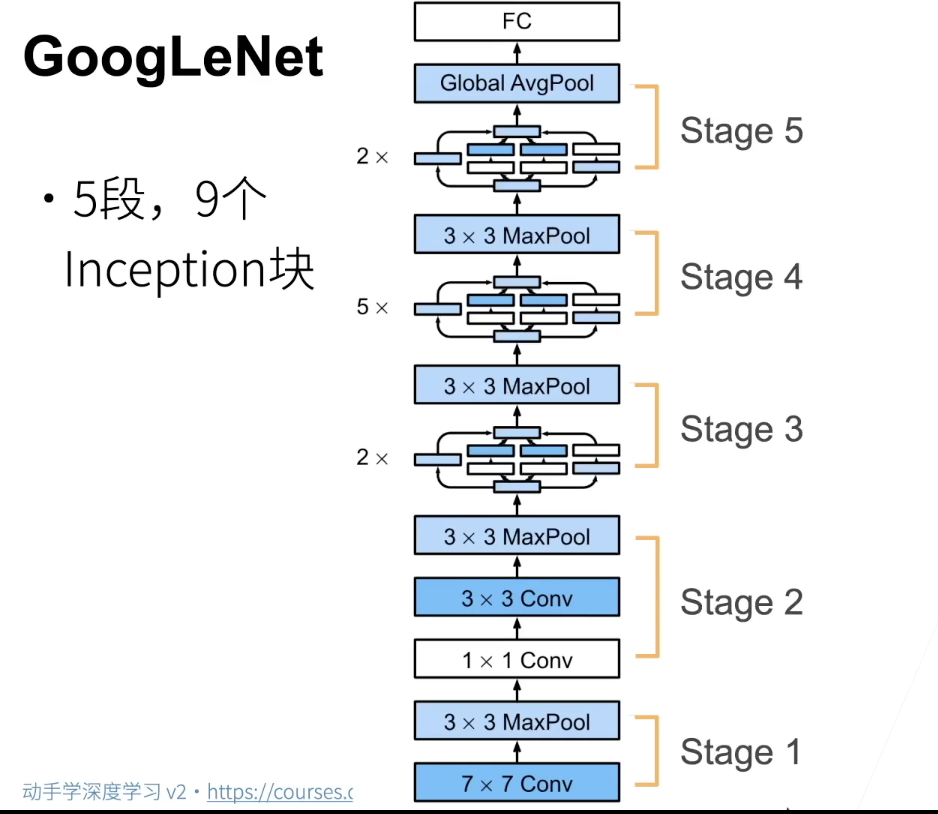

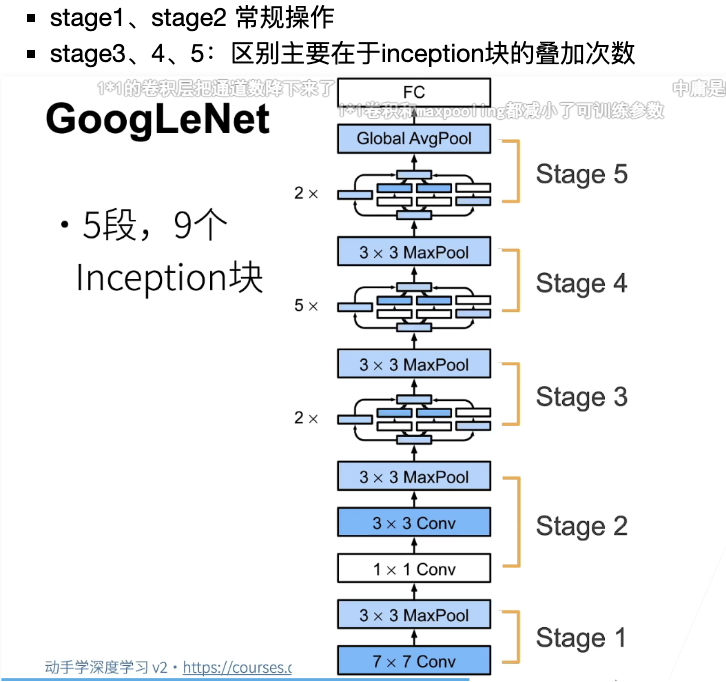

# Next, let's implement this network ourselves

# The first module uses a 7x7 convolutional layer with 64 output channels

b1 = nn.Sequential(

nn.Conv2d(1,64,kernel_size=7,padding=3,stride=2),

nn.ReLU(),

nn.MaxPool2d(kernel_size=3,padding=1,stride=2)

)

b2 = nn.Sequential(

nn.Conv2d(64,64,kernel_size=1),

nn.ReLU(),

nn.Conv2d(64,192,kernel_size=3,padding=1),

# ReLU after the 3x3 convolution, matching the pattern used in b1

nn.ReLU(),

nn.MaxPool2d(kernel_size=3,padding=1,stride=2)

)

# Inception blocks are used from stage 3 onward

b3 = nn.Sequential(

# Input channels: 192; output channels: 64 + 128 + 32 + 32 = 256

Inception(in_channels= 192,c1 = 64,c2=(96,128),c3=(16,32),c4=32),

# Output channels: 128 + 192 + 96 + 64 = 480

Inception(256, 128, (128, 192), (32, 96), 64),

nn.MaxPool2d(kernel_size=3,padding=1,stride=2)

)

# Stage 4: once stage 3 is written, stage 4 follows the same pattern

b4 = nn.Sequential(

Inception(480, 192, (96, 208), (16, 48), 64),

Inception(512, 160, (112, 224), (24, 64), 64),

Inception(512, 128, (128, 256), (24, 64), 64),

Inception(512, 112, (144, 288), (32, 64), 64),

Inception(528, 256, (160, 320), (32, 128), 128),

nn.MaxPool2d(kernel_size=3, stride=2, padding=1)

)

# Stage 5 is the output stage

b5 = nn.Sequential(

Inception(832, 256, (160, 320), (32, 128), 128),

Inception(832, 384, (192, 384), (48, 128), 128),

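# Adaptive average pooling: the kernel size and stride are chosen automatically to produce the requested output size (1x1 here), which is convenient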

nn.AdaptiveAvgPool2d((1,1)),

nn.Flatten()

)

# b1 through b5 are each an nn.Sequential; since nn.Sequential is itself an nn.Module, they can be nested directly inside a new nn.Sequential

net = nn.Sequential(b1, b2, b3, b4, b5, nn.Linear(1024, 10))

# The input here is 96x96 rather than 224x224, to speed up training

X = torch.rand(size=(1, 1, 96, 96))

for layer in net:
    X = layer(X)
    print(layer.__class__.__name__, 'output shape:\t', X.shape)

lr, num_epochs, batch_size = 0.1, 10, 128

train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size, resize=96)

d2l.train_ch6(net, train_iter, test_iter, num_epochs, lr, d2l.try_gpu())

|
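The channel counts written in the comments above can be checked without building the network. The sketch below is plain Python and assumes the `Inception` block (defined outside this section) concatenates its four branches along the channel dimension, so its output width is `c1 + c2[1] + c3[1] + c4`. The helper names `inception_out_channels` and `check_chain` are hypothetical and only for illustration.

```python
# Assumption: Inception concatenates four branches, so the output channel
# count is c1 + c2[1] + c3[1] + c4 (c2[0] and c3[0] are internal 1x1 widths).
def inception_out_channels(c1, c2, c3, c4):
    return c1 + c2[1] + c3[1] + c4

# (in_channels, c1, c2, c3, c4) for every Inception block in b3, b4, b5,
# copied from the listing above.
BLOCKS = [
    (192, 64, (96, 128), (16, 32), 32),
    (256, 128, (128, 192), (32, 96), 64),
    (480, 192, (96, 208), (16, 48), 64),
    (512, 160, (112, 224), (24, 64), 64),
    (512, 128, (128, 256), (24, 64), 64),
    (512, 112, (144, 288), (32, 64), 64),
    (528, 256, (160, 320), (32, 128), 128),
    (832, 256, (160, 320), (32, 128), 128),
    (832, 384, (192, 384), (48, 128), 128),
]

def check_chain(blocks, first_in):
    """Verify each block's in_channels equals the previous block's output.

    Max pooling between stages does not change the channel count, so the
    chain can be checked across stage boundaries as well.
    """
    current = first_in
    outputs = []
    for in_channels, c1, c2, c3, c4 in blocks:
        if in_channels != current:
            raise ValueError(f"expected {current} input channels, got {in_channels}")
        current = inception_out_channels(c1, c2, c3, c4)
        outputs.append(current)
    return outputs

outputs = check_chain(BLOCKS, first_in=192)
print(outputs)
```

The last value in `outputs` is the width fed to the final `nn.Linear`, which is why that layer takes 1024 input features.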

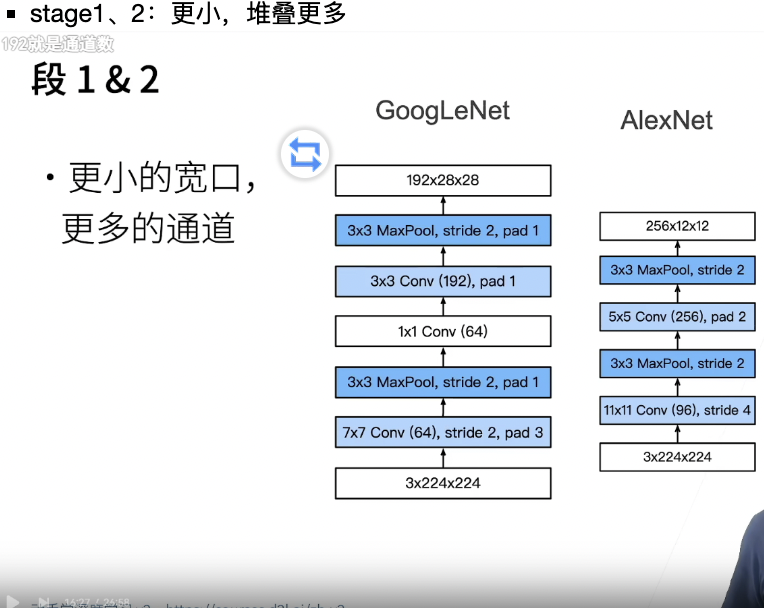

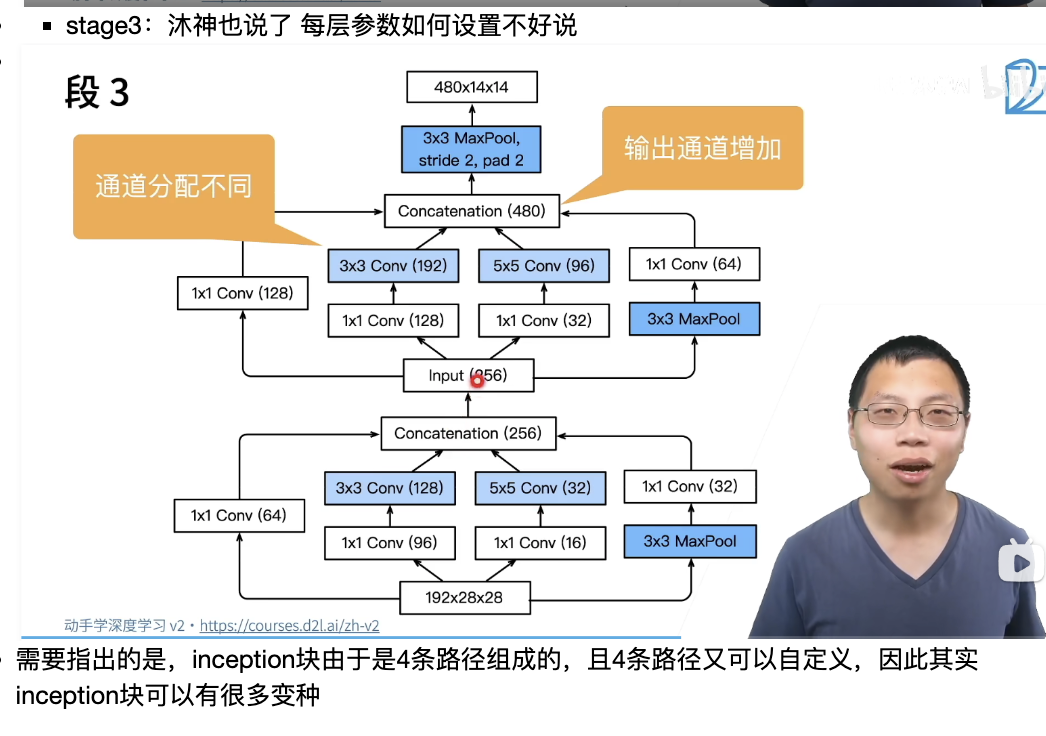

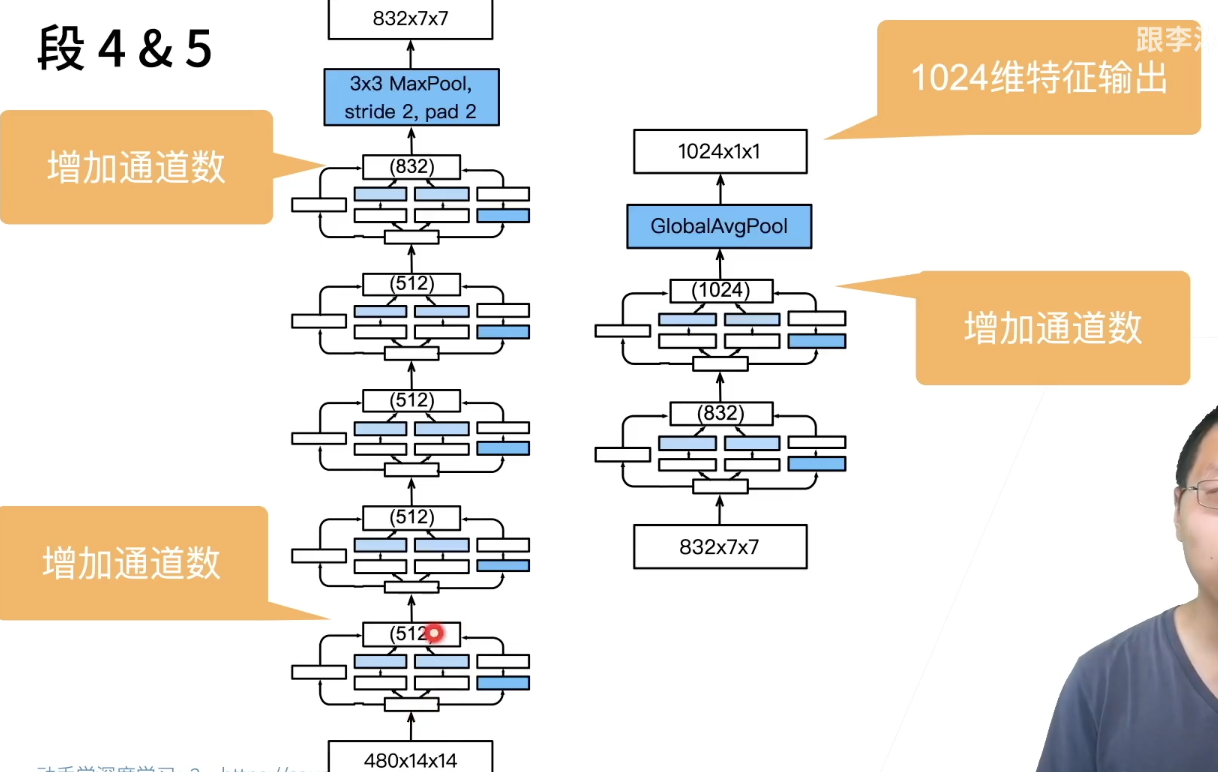
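The spatial sizes printed by the shape loop can also be worked out by hand. The sketch below applies the standard output-size formula for convolution and pooling layers, floor((n + 2p - k) / s) + 1, to the layers that change resolution in each stage. It assumes the Inception blocks preserve height and width (their branches are padded to do so) and that `MaxPool2d` uses its default floor rounding. The helper names `out_size` and `stage_sizes` are hypothetical.

```python
def out_size(n, kernel, stride=1, padding=0):
    """Output length of a conv/pool layer along one spatial dimension."""
    return (n + 2 * padding - kernel) // stride + 1

def stage_sizes(n):
    """Spatial size after each of b1..b4 for a square n x n input."""
    sizes = {}
    n = out_size(n, kernel=7, stride=2, padding=3)  # b1: 7x7 conv, stride 2
    n = out_size(n, kernel=3, stride=2, padding=1)  # b1: 3x3 max pool
    sizes["b1"] = n
    n = out_size(n, kernel=1)                       # b2: 1x1 conv, size unchanged
    n = out_size(n, kernel=3, padding=1)            # b2: 3x3 conv, size unchanged
    n = out_size(n, kernel=3, stride=2, padding=1)  # b2: 3x3 max pool
    sizes["b2"] = n
    n = out_size(n, kernel=3, stride=2, padding=1)  # b3: only the max pool shrinks it
    sizes["b3"] = n
    n = out_size(n, kernel=3, stride=2, padding=1)  # b4: only the max pool shrinks it
    sizes["b4"] = n
    return sizes

print(stage_sizes(96))
print(stage_sizes(224))
```

Each of the first four stages halves the resolution, so a 96x96 input reaches b5 at 3x3 while a 224x224 input would reach it at 7x7. In both cases the adaptive average pooling in b5 reduces the map to 1x1, which is why the same network accepts either input size.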

Each stage is discussed in detail below: